Blog

From Helpers to Doers: What Production-Grade LLM Development Actually Looks Like

That change forces a different way of working with LLMs, one that looks far less like “vibe coding” and far more like disciplined engineering.

Root team

The Root team

Published :

Mar 3, 2026

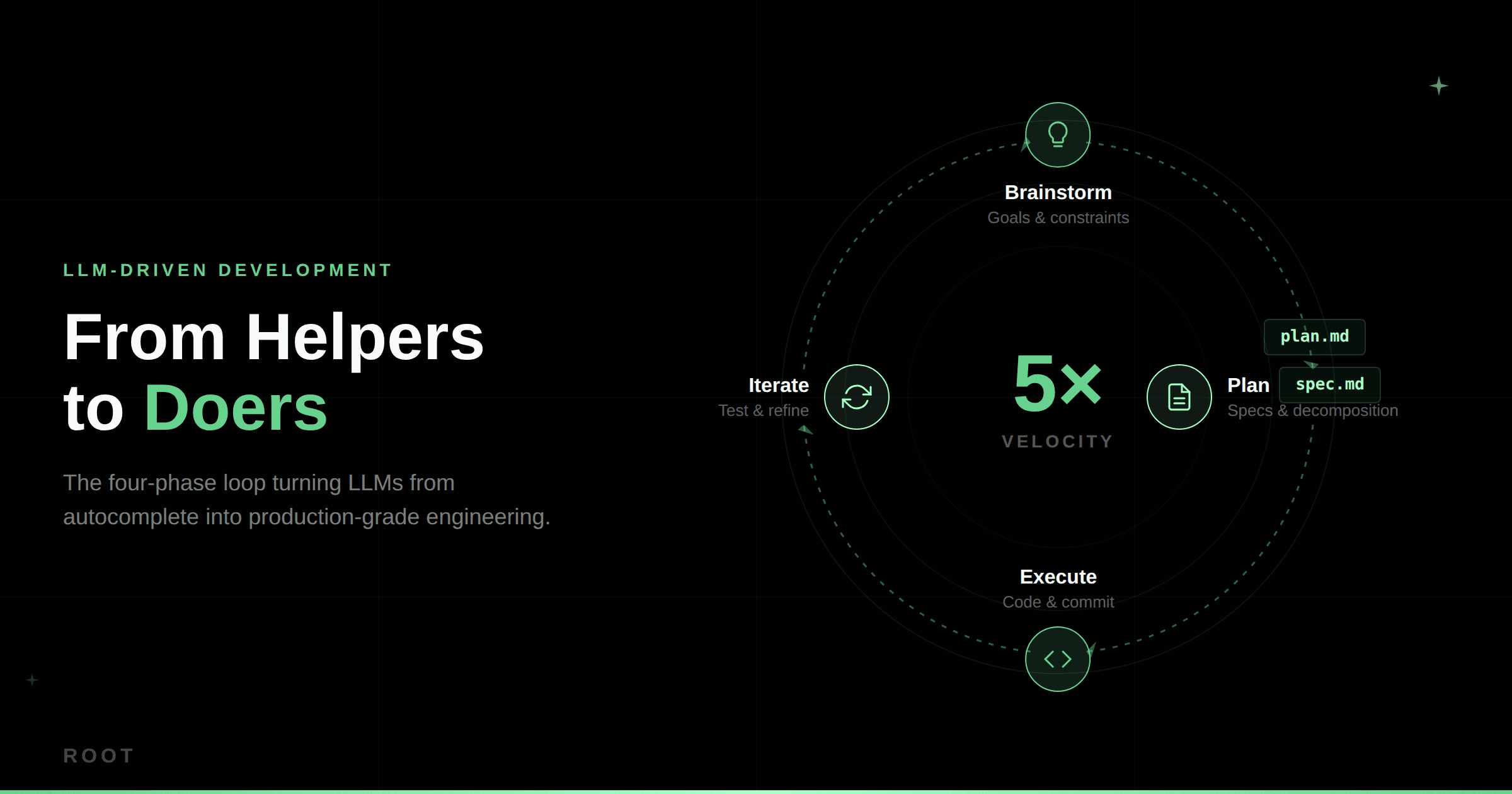

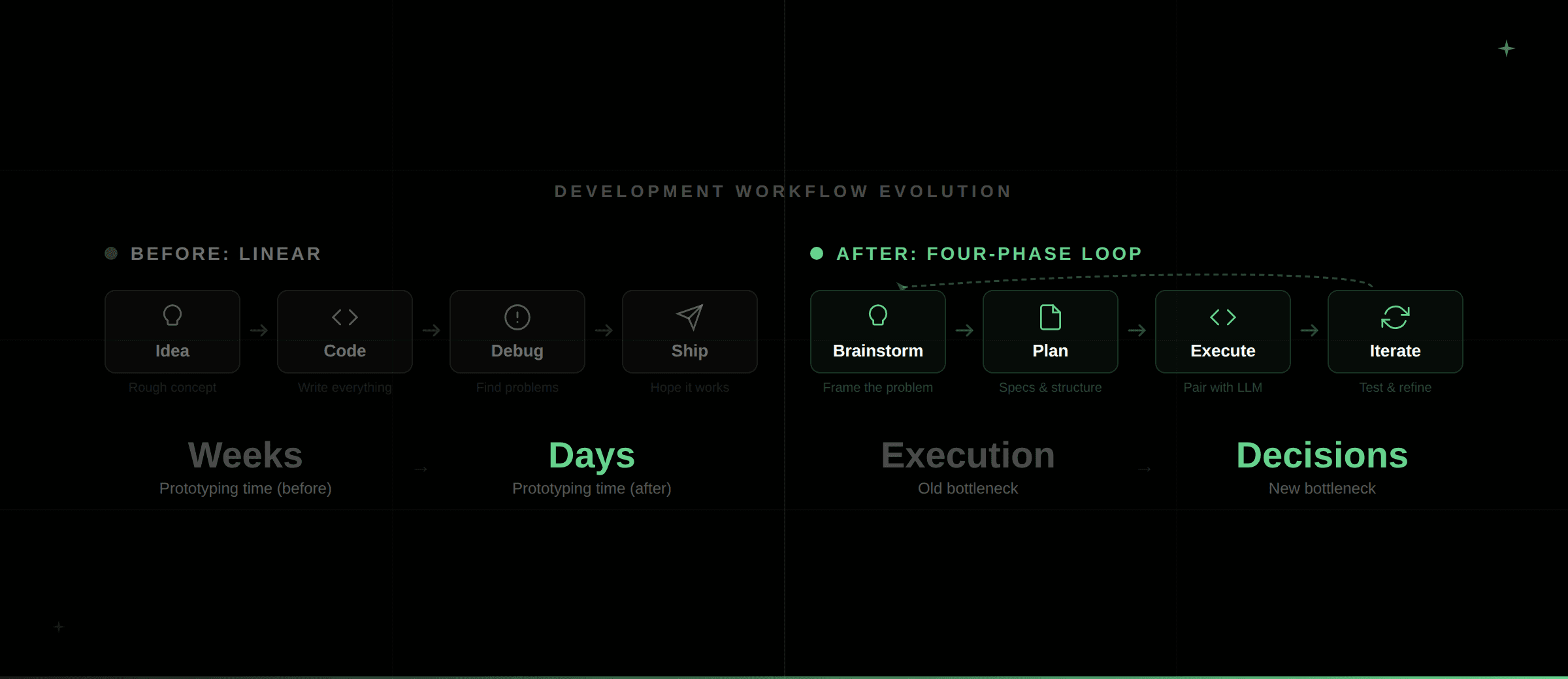

Large language models have crossed a line. They are no longer just assisting engineers with autocomplete or boilerplate. In real production environments, they are now capable of planning systems, writing full subsystems, debugging failures, and generating tests. When used deliberately, teams are seeing development velocity increase by as much as fivefold. Work that once took weeks now takes days, and work that took days often fits into a single afternoon.

The interesting part is not raw speed. The real shift is where the bottleneck has moved. Execution is no longer the slowest step. Deciding what to build next, how to structure it, and how to validate it has become the limiting factor. That change forces a different way of working with LLMs, one that looks far less like “vibe coding” and far more like disciplined engineering.

As a small, agile startup, Root.io was able to institutionalize this transition early. Short feedback loops, fewer legacy constraints, and a culture of experimentation made it easier to move fast and adapt workflows around LLMs. For many larger organizations, the starting point is far less obvious. The longer adoption is delayed, the harder it becomes to catch up, as tooling, expectations, and development norms continue to evolve at a rapid pace.

This post dives into how teams can get on the LLM-driven development train before the gap widens further, focusing on practical workflows, guardrails, and decision points rather than abstract promises or tooling hype.

LLMs as More than Copilots: The Four-Phase Loop

Production workflows settle into four phases that repeat: brainstorm, plan, execute, iterate.

Brainstorm

Brainstorming is intentionally loose. Engineers start with a rough idea of what they want to build and why. LLMs are used to draft a high-level brief that captures goals, constraints, and non-requirements. The importance of this phase is about framing, not correctness.

Plan

Planning is where most of the leverage comes from. Qualitative ideas are turned into concrete specifications written in Markdown. Instead of asking the model to immediately produce code, it is instructed to ask questions one at a time. This is the opportunity for assumptions to be challenged, edge cases to be surfaced, and tradeoffs to be made explicit. Chain-of-reasoning prompts force the model to decompose the problem step by step.

Two artifacts come out of this phase:

A plan.md file describes how the work will be broken down and sequenced.

A spec.md file defines each module in terms of inputs, outputs, constraints, and test expectations.

The clarity of these documents directly determines how smooth execution will be.

Execute

Execution happens in Cursor or Claude Code. The specs are loaded in full, and work proceeds one module at a time. The model generates code, while the engineer interrogates it.

Why was this approach chosen?

What assumptions are baked in?

Can it be simpler?

With this in mind, the code is reduced, edited, and committed, where frequent Git checkpoints make it easy to recover when a direction turns out to be wrong.

Iterate

Iteration is the phase where the loop is closed. Tests are run, failures are debugged, and refactoring happens continuously. The model is used to reason through errors step by step, but nothing is accepted until it passes locally and makes sense to a human reviewer.

This loop repeats quickly, and the idea is for the velocity gains to come from removing friction between thinking and execution, not by skipping validation.

Planning as Leverage

Most teams skip proper planning and pay for it later in bloat, confusion, and rewrites. This mirrors the old pattern of skipping threat modeling in the SDLC and discovering security issues in production.

The planning phase works because it converts the LLM from a code generator into a design reviewer. When you force it to ask questions one by one, it surfaces gaps that would normally only appear after implementation. You end up with specs that are testable, unambiguous, and grounded in actual constraints rather than wishful thinking.

By the time you start execution, the hard decisions are made. The model isn't guessing what you meant anymore. It's implementing something concrete.

Execution + Testing = Control with Quality

In practice, execution is a tight pair-programming loop, where tools like Cursor will generate code, and the engineer immediately asks for an explanation in plain English. Whatever feels overbuilt is trimmed, and anything unclear is rewritten.

LLMs tend to overproduce and therefore reduction is critical at this stage, for example LLMs may at times recreate entire libraries instead of using existing ones. If this is not caught early, the result is code that is technically functional but operationally toxic, as the age-old adage goes - writing the code is the easy part, it’s the maintenance that’s the hard part.

Test-driven development isn't optional in this workflow, it's the main quality indicator. When the LLM generates weak tests, the implementation is almost always weak too.

A good practice is to generate tests before code. When something fails, paste the error and the test into context, then ask the model to walk through what broke and why. Claude is particularly good at explaining failure states and tracing causality back through the logic. OpenAI models tend to be better at proposing specific, targeted fixes that address the root cause.

Regardless of which model you use, every fix needs to be validated locally before it's accepted. During debugging, ground the model in real data rather than synthetic examples. Connect it to actual logs, real database states, real error messages with real stack traces. In some cases, you can instruct the LLM to spin up its own local tests and verify outputs against actual behavior rather than asking you to do it manually.

This dramatically reduces hallucinations. When models work with real structures instead of synthetic examples, they stop inventing APIs that don't exist or assuming fields that were never defined. The difference between debugging with real data versus imagined data is substantial.

Scaling to Large Codebases

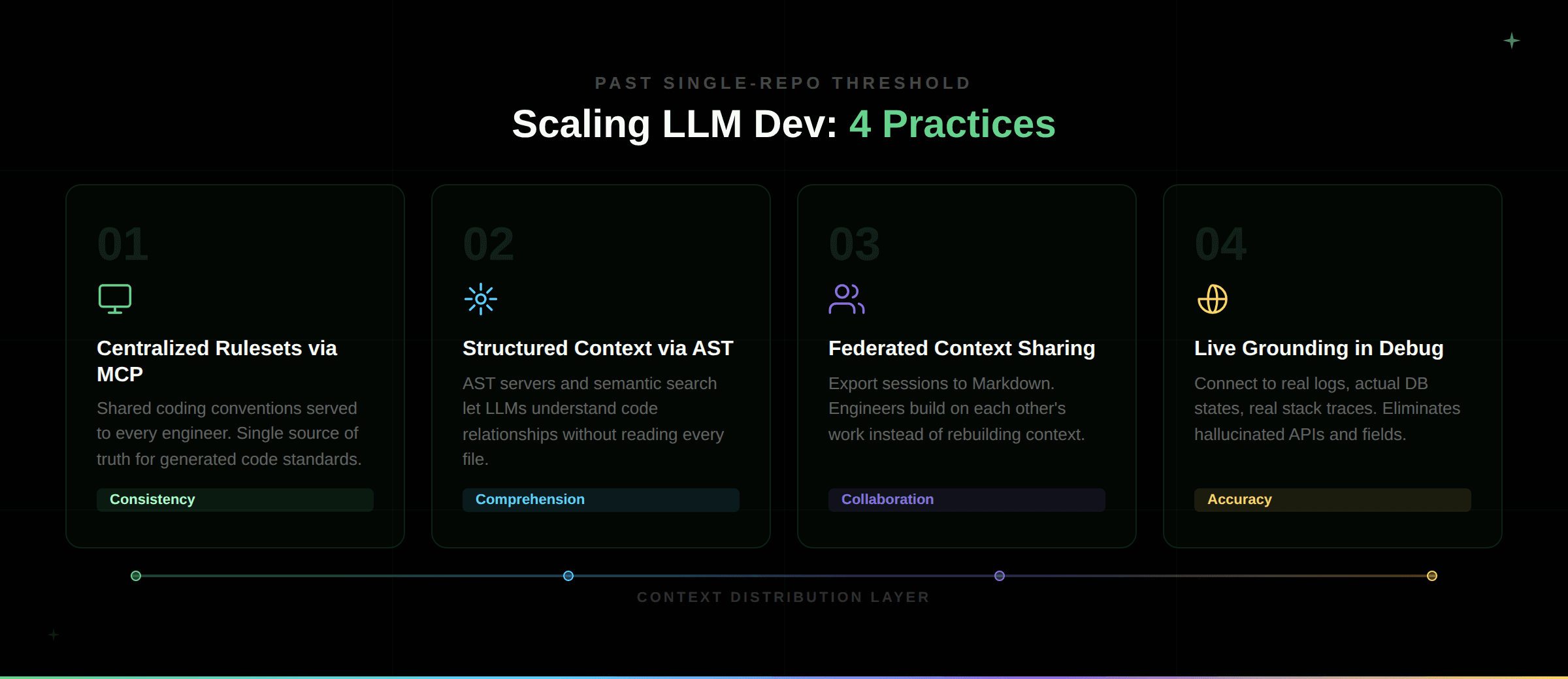

The practices described so far: structured specs, pair programming loops, test-first development, live grounding, work well when you're operating within a single repository or a handful of related modules. But once you're working across multiple repositories, microservices architectures, and distributed teams, the context problem becomes acute. You can't dump six codebases into a prompt. Engineers duplicate work because they don't know what their teammates' LLM sessions already figured out. Models lose track of system boundaries and start making assumptions about interfaces they can't see.

Scaling LLM-driven development past the single-repo threshold requires four practices that explicitly solve the context distribution problem.

Centralized rulesets served via MCP servers. Store coding conventions as Cursor and Claude rules in a shared repository, then serve them through an MCP server to every engineer. This ensures consistent outputs. Many of these rules were originally generated by LLMs themselves, distilled from existing code and refined over time. The ruleset encodes things like "always use this logging format" or "never implement custom retry logic when library X exists." It becomes a single source of truth that keeps generated code aligned with standards.

Structured context through AST servers and search infrastructure. Abstract syntax tree servers let LLMs understand code relationships without reading every file character by character. Elasticsearch indices enable semantic search across massive codebases. Some teams use graph databases like Neo4j to map dependencies and call chains. The key is giving models structured representations of code rather than dumping raw source files and hoping they figure it out.

Federated context sharing through exported sessions. Periodically dump Cursor sessions to Markdown and store them centrally. This creates recovery points and, more importantly, lets engineers build on each other's work. What one session learned about a subsystem gets loaded by another engineer tackling a related module. Over time, this pools understanding across the organization instead of forcing everyone to rebuild context independently.

Live grounding during debugging. Connect models to actual logs and real database states, not synthetic examples. Paste real error messages with real stack traces. Instruct the LLM to spin up local tests and verify outputs against actual behavior. When models can query actual schemas and real endpoints, they stop hallucinating fields that don't exist.

These four practices are what separate LLM-driven development that works at scale from proof-of-concept demos that collapse under production load.

The Engineer's Role Shifts

The engineer's role is shifting from writing every line of code to the architectural decisions, assumption validation, and quality enforcement - that are becoming more critical in an LLM-driven world. The shift is basically from mechanical execution to continuous judgment.

Engineers stop spending hours typing boilerplate or debugging syntax errors. Instead, they spend time on problems that actually require human insight: does this architecture make sense for our constraints? Are we solving the right problem? What are the failure modes we haven't considered? These are questions that LLMs can't answer on their own, no matter how fast they generate code.

The result is faster iteration with tighter feedback loops. The gap between deciding what to build and seeing it work compresses dramatically. Work that used to take a week to prototype now takes an afternoon. That compression doesn't just save time - it changes what kinds of experiments become feasible, what kinds of technical debt can be addressed, and how quickly teams can respond to changing requirements.

The real win isn't speed just to optimize vanity metrics. It's that engineers can spend their time on vision and strategy rather than grinding through implementation details. At this point, execution is no longer the bottleneck - deciding what's worth building next is.

Continue Reading