How Root's patching pipeline responds to supply chain attacks

Most mature platforms scan for malware somewhere. Every patch workflow in Root's pipeline — OS package or application library — runs two malware scans.

Benji Kalman

VP of Engineering, Co-Founder

Published: May 1, 2026

Supply chain attacks are not a new category, but the threat shape has changed. axios. TeamPCP. A run of incidents where legitimate packages, from legitimate maintainers, carried malicious payloads through legitimate distribution channels — no typosquatting, no obvious signals, just trusted software turning untrustworthy for a window of hours.

Most mature platforms scan for malware somewhere. The interesting question is where in the pipeline the scan runs, what it covers, and what the system does when the compromised package hasn't been assigned a CVE yet.

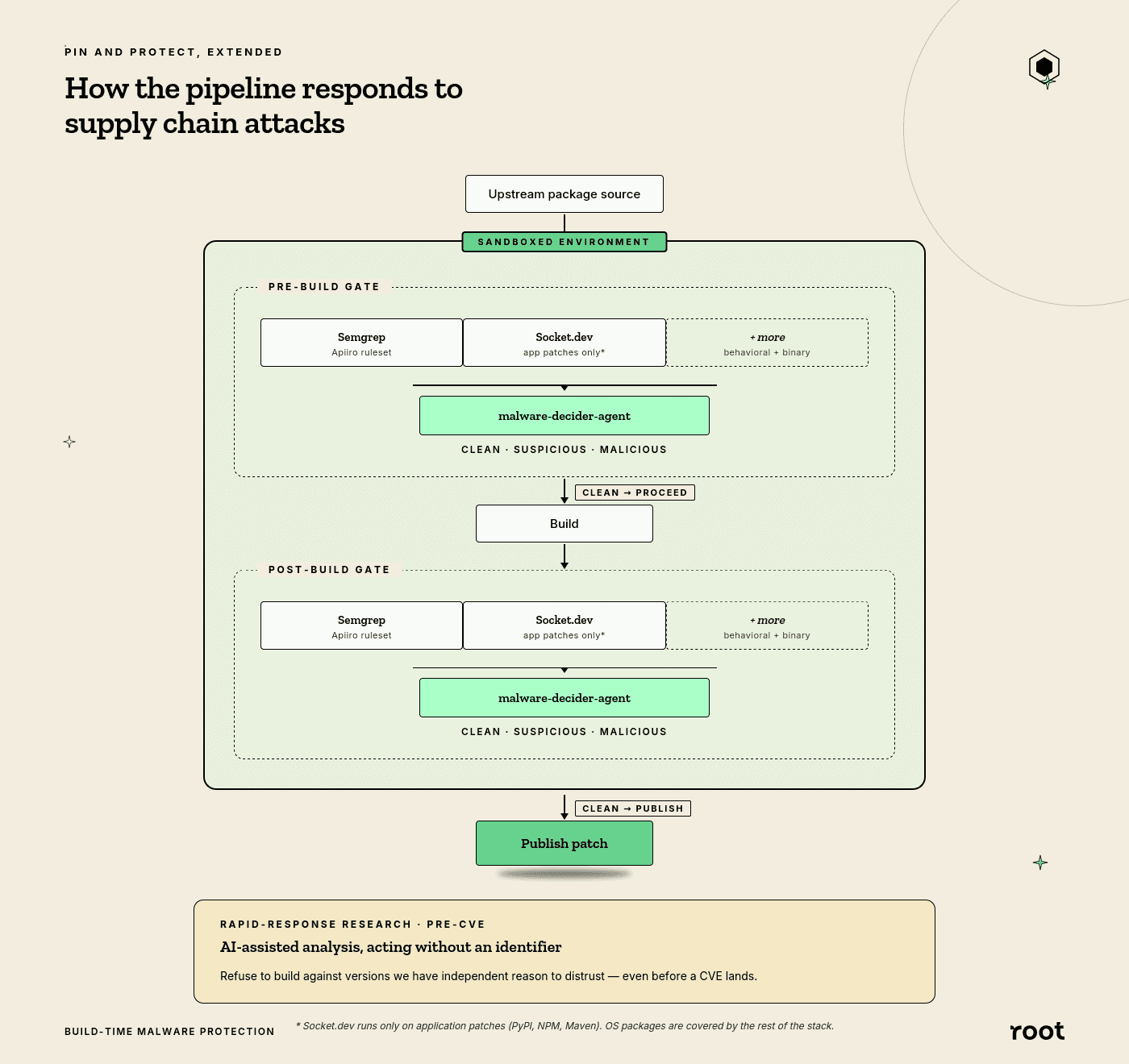

Two gates in the patch pipeline

Every patch workflow in Root's pipeline — OS package or application library — runs two malware scans. The pre-build scan runs on source pulled from upstream, catching compromised versions sitting in the registry. The post-build scan runs on the artifact before publication, catching build-time injection from compilation, dependency resolution, or post-install hooks.

Source-only gates miss build-time injection. Artifact-only gates can miss packages that were malicious going in. Running both closes the window between them.

At each gate, three scanners run in parallel. Semgrep handles static analysis with Apiiro's malicious-code ruleset plus supply-chain rules. Socket.dev adds package-level detection on application patches (PyPI, npm, Maven). Behavioral and binary analysis rounds out the stack. Socket.dev doesn't cover OS packages; for those, the other two layers carry the signal.
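To make the two-gate shape concrete, here is a minimal sketch, not Root's actual implementation. The `Finding` type, the scanner interface, the `"package"` scanner key, and the `build` and `should_block` callables are all hypothetical names introduced for illustration. It shows the same set of scanners running concurrently at each gate, with the package-level scanner skipped for OS packages.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    scanner: str  # which scanner raised it
    path: str     # file the finding points at
    rule: str     # rule or signature identifier


# Hypothetical scanner interface: takes a directory, returns findings.
Scanner = Callable[[str], list[Finding]]


def scanners_for(kind: str, available: dict[str, Scanner]) -> dict[str, Scanner]:
    """Select scanners for a patch kind ("os" or "app").

    The package-level scanner is skipped for OS packages, which it does not cover.
    """
    if kind == "os":
        return {name: s for name, s in available.items() if name != "package"}
    return dict(available)


def run_gate(target: str, scanners: dict[str, Scanner]) -> list[Finding]:
    """Run all scanners against one target concurrently and merge their findings."""
    with ThreadPoolExecutor(max_workers=max(1, len(scanners))) as pool:
        futures = [pool.submit(scan, target) for scan in scanners.values()]
        return [finding for future in futures for finding in future.result()]


def patch_pipeline(
    source_dir: str,
    kind: str,
    available: dict[str, Scanner],
    build: Callable[[str], str],
    should_block: Callable[[list[Finding]], bool],
) -> str:
    """Scan the source, build, then scan the artifact; stop at the first blocked gate."""
    scanners = scanners_for(kind, available)
    if should_block(run_gate(source_dir, scanners)):
        return "blocked:pre-build"
    artifact_dir = build(source_dir)  # assumed to return the artifact location
    if should_block(run_gate(artifact_dir, scanners)):
        return "blocked:post-build"
    return "published"
```

The blocking decision is deliberately passed in as `should_block` rather than being "any finding at all", since raw scanner output on its own is too noisy to block on.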

Cross-correlation, not raw tool output

Running three scanners in parallel produces a lot of noise. Individually, each tool has a nontrivial false-positive rate — a finding from any one of them, in isolation, is something you want a human to look at, not something you want to block a build on automatically.

So we don't block on raw tool output. An AI adjudication agent — malware-decider-agent — cross-correlates findings across the three scanners and produces a single verdict. MALICIOUS blocks the build. SUSPICIOUS logs and continues. CLEAN passes through. The agent also suppresses predictable false positives: findings in test files, documentation, READMEs, and cert test data, where malware-like patterns are expected and harmless.
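The verdict logic can be illustrated with a small rule-based stand-in. This is not the AI agent itself: the agreement rule (two or more distinct scanners flagging the same file means MALICIOUS), the benign path markers, and every function name below are assumptions made for the sketch. What it does show is the three-way verdict and the suppression of findings in tests and documentation before any correlation happens.

```python
from dataclasses import dataclass
from enum import Enum
from pathlib import PurePosixPath


class Verdict(Enum):
    MALICIOUS = "MALICIOUS"    # block the build
    SUSPICIOUS = "SUSPICIOUS"  # log and continue
    CLEAN = "CLEAN"            # pass through


@dataclass(frozen=True)
class Finding:
    scanner: str
    path: str
    rule: str


# Assumed markers for locations where malware-like patterns are expected.
BENIGN_DIRS = {"test", "tests", "testdata", "docs", "doc", "examples"}
BENIGN_SUFFIXES = {".md", ".rst"}


def is_expected_noise(path: str) -> bool:
    """True for findings in test files, documentation, and READMEs."""
    p = PurePosixPath(path)
    in_benign_dir = any(part.lower() in BENIGN_DIRS for part in p.parts[:-1])
    is_doc = p.suffix.lower() in BENIGN_SUFFIXES
    return in_benign_dir or is_doc or p.name.upper().startswith("README")


def adjudicate(findings: list[Finding]) -> Verdict:
    """Collapse findings from all scanners into a single verdict."""
    relevant = [f for f in findings if not is_expected_noise(f.path)]
    scanners_by_path: dict[str, set[str]] = {}
    for f in relevant:
        scanners_by_path.setdefault(f.path, set()).add(f.scanner)
    # Assumed rule: independent scanners agreeing on one file is strong evidence.
    if any(len(names) >= 2 for names in scanners_by_path.values()):
        return Verdict.MALICIOUS
    return Verdict.SUSPICIOUS if relevant else Verdict.CLEAN


def gate_allows_build(verdict: Verdict) -> bool:
    """Only MALICIOUS stops the build; SUSPICIOUS is logged elsewhere."""
    return verdict is not Verdict.MALICIOUS
```

A model-based adjudicator can weigh context that a fixed rule like this cannot, which is the reason for using an agent rather than a threshold.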

The scanners are commodity. The ability to reason across three streams of noisy output — and fail closed on real threats without failing closed on every binary with a base64 string in a unit test — is the engineering.

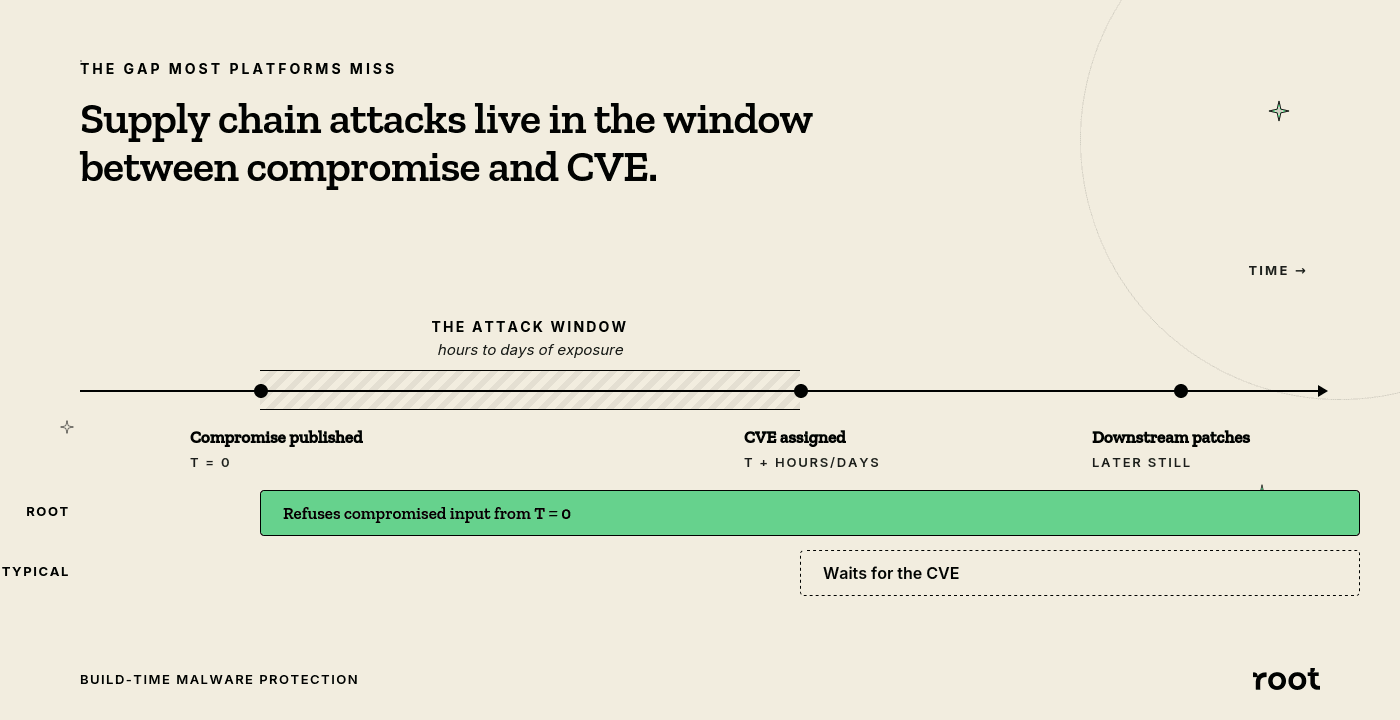

Before the CVE: acting without an identifier

The axios case highlights a structural gap in how security platforms normally operate. A compromised version gets published. For some window — hours, sometimes days — that version is live in the registry, being pulled and built against. No CVE has been assigned yet. Most platforms are waiting for an identifier before they can act.

The plan is to not wait.

Our rapid-response approach is built around AI-assisted analysis: feeding a package name and version into a language model, pulling the source, analyzing the diff against prior versions, looking for the shape of a supply chain attack. Not "does this match a signature" but "does this package look like it just got taken over."
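As a rough illustration of the "diff against prior versions" step, the sketch below uses Python's standard `difflib` to produce a unified diff of every file added or changed between two versions, then wraps it in a prompt. The function names, the in-memory path-to-content mapping, and the prompt wording are all assumptions; fetching the source and calling a model are left out.

```python
import difflib


def version_diff(prior: dict[str, str], candidate: dict[str, str]) -> str:
    """Unified diff of every file added or changed between two versions.

    Both arguments map a file path to its text content. Files that were
    deleted in the candidate version are not included in this sketch.
    """
    chunks: list[str] = []
    for path in sorted(candidate):
        old = prior.get(path, "")
        new = candidate[path]
        if old == new:
            continue
        chunks.extend(
            difflib.unified_diff(
                old.splitlines(),
                new.splitlines(),
                fromfile=f"prior/{path}",
                tofile=f"candidate/{path}",
                lineterm="",
            )
        )
    return "\n".join(chunks)


def build_review_prompt(package: str, prior_version: str, version: str, diff: str) -> str:
    """Assemble the text handed to a model; the wording is illustrative only."""
    return (
        f"Package {package} changed from {prior_version} to {version}.\n"
        "Does this diff look like a maintainer account or release takeover?\n"
        "Look for new install hooks, obfuscated code, and unexpected network calls.\n\n"
        f"{diff}"
    )
```

Sending only the diff keeps the model's attention on what changed in the suspect release rather than on the whole codebase.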

Signal from that research flows to two places. First, into the build pipeline as an additional input to the gate decision: we can refuse to build a patch against a version we have independent reason to distrust, even without a CVE. Second, back out to the community, for free: supply chain attacks are a shared problem, and the defensive signal shouldn't be paywalled.
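One way to picture research signal entering the gate decision is a list of distrusted package versions that is consulted alongside the scan verdict. The `DistrustList` class, the `gate_decision` function, and the string verdicts below are hypothetical, a sketch of the idea rather than a description of how the pipeline stores or consumes that signal.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DistrustList:
    """Package versions flagged by rapid-response analysis; no CVE required."""

    entries: dict[tuple[str, str], str] = field(default_factory=dict)

    def flag(self, package: str, version: str, reason: str) -> None:
        self.entries[(package, version)] = reason

    def reason_for(self, package: str, version: str) -> Optional[str]:
        return self.entries.get((package, version))


def gate_decision(
    package: str, version: str, scan_verdict: str, distrust: DistrustList
) -> tuple[bool, str]:
    """Return (allow_build, explanation), checking distrust before the scan verdict."""
    reason = distrust.reason_for(package, version)
    if reason is not None:
        return False, f"distrusted upstream version: {reason}"
    if scan_verdict == "MALICIOUS":
        return False, "scanners adjudicated MALICIOUS"
    return True, "ok"
```

With this shape, a version can be refused even when every scanner reports CLEAN, which is the case that matters in the window before an identifier exists.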

The two layers solve different problems. The build gate is enforcement — it refuses to produce compromised output. The rapid-response workflow is early detection — it gets ahead of the CVE clock. Neither replaces the other.

The trust boundary, evolving

Root's patching engine has always sat at a single trust boundary: between upstream ecosystems and the artifacts customers actually pull. The engine's job has always been to refuse to produce compromised output. CVE patching was the first version of that job. Build-time malware scanning is the same job, adapted to a threat landscape where the thing customers need protection from isn't always a known vulnerability — sometimes it's a legitimate package that stopped being trustworthy yesterday.

The point isn't that the engine scans for malware. It's where it scans, how it adjudicates, and what it can do before the rest of the ecosystem has agreed there's a threat to act on.
